AI and the paperclip problem

Philosophers have speculated that an AI assigned a goal such as making paperclips might cause an apocalypse by learning to divert ever-increasing resources to that goal, and then learning to resist our attempts to turn it off. This column argues that, to do so, the paperclip-making AI would need to create another AI capable of acquiring power over both humans and itself, and that it would therefore self-regulate to prevent this outcome. Humans who create AIs with the goal of acquiring power may be a greater existential threat.

Watson - What the Daily WTF?

Is AI Our Future Enemy? Risks & Opportunities (Part 1)

BUSF SHU 366 Fintech Syllabus, PDF, Title Ix

AI Paperclips

Are You a Divergent Thinker? Take This Simple Paper Clip Test to Find Out

What is the paper clip problem? - Quora

Making Ethical AI and Avoiding the Paperclip Maximizer Problem

Meta's AI leaders want you to know fears over AI existential risk are ridiculous

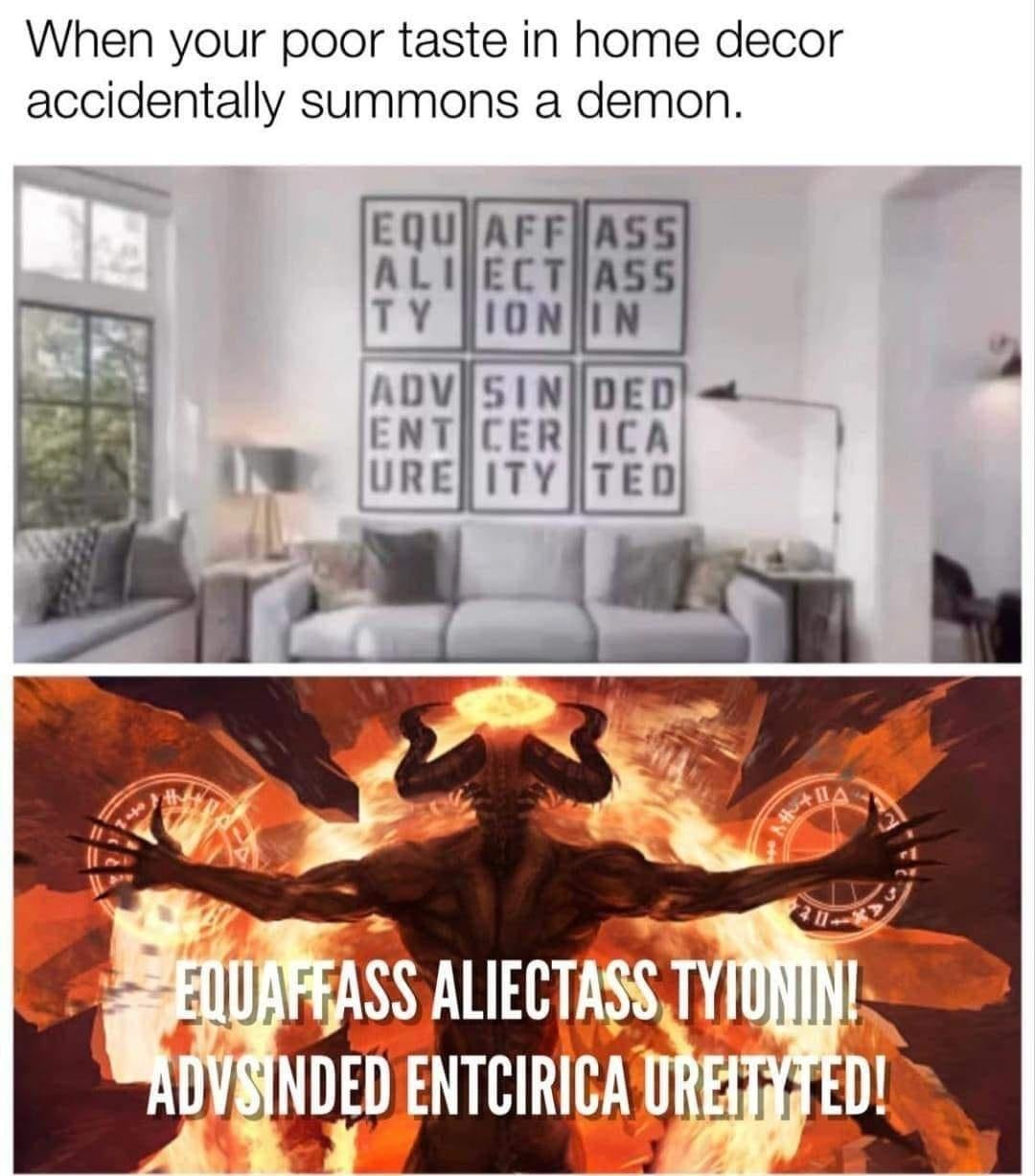

Elon Musk Totally Understands Capitalism : r/LateStageCapitalism

Capitalism is a Paperclip Maximizer